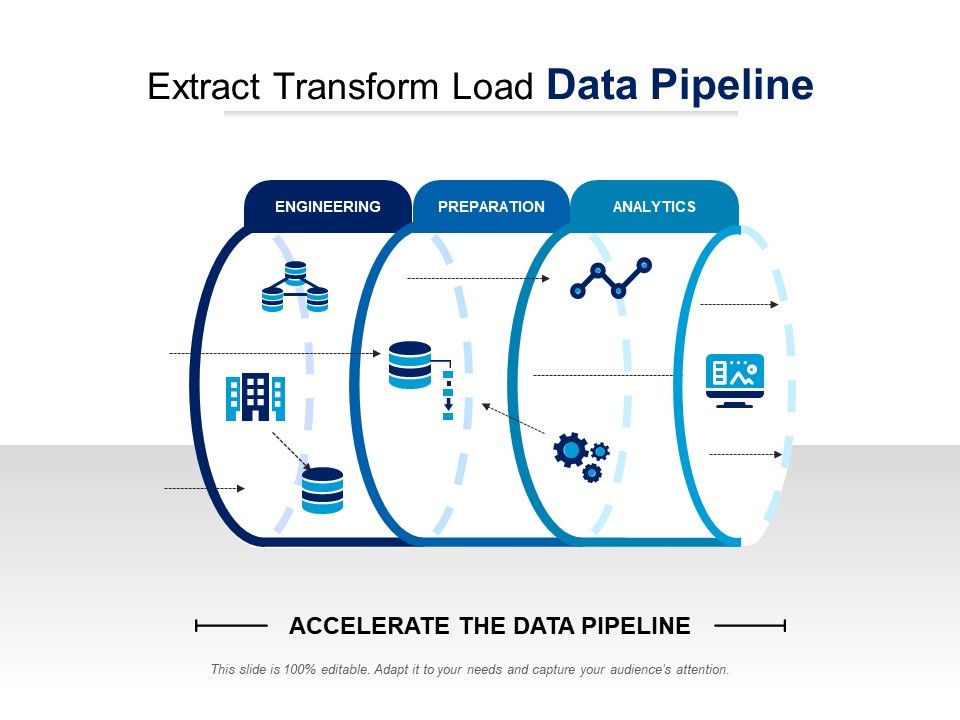

Data extract transform load

3/24/2024

In the mobile application ecosystem, most dashboards are built on data integrated through ETL processes. What is an example of an ETL process?

ETL stands for Extract, Transform, Load. The term is used in data pipeline management and data engineering. It describes a process in which data from different sources, in different formats, is extracted and transformed into one format. The data can then be integrated and loaded into a single database or data warehouse. Data pulled from different sources into a warehouse typically goes through an ETL process.

Pairing ETL with a real-time event capture and data integration solution enables real-time ETL, because it combines real-time source data integration with automated ETL generation. Such a setup can support a wide ecosystem of heterogeneous data sources, including relational, legacy, and NoSQL data stores.

ETL automation tools automatically generate the ETL commands, data warehouse structures, and documentation needed to design, build, and maintain your data warehouse program. This helps you save time, reduce cost, and reduce project risk. ETL automation frees you from error-prone manual coding and automates the entire data warehousing lifecycle, from design and development to impact analysis and change management. Newer, agile data warehouse automation and transformation platforms go further. They eliminate the need for conventional ETL tools by automating the repetitive, labor-intensive tasks associated with ETL integration and data warehousing.
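As an illustration of the extract, transform, and load steps described above, here is a minimal sketch. It assumes two hypothetical sources reporting daily app installs in different formats: a CSV export with US-style dates and a JSON export with ISO dates. Python's built-in `sqlite3` stands in for the target database. The source names, field names, and the `installs` table are all made up for the example, and this is not a production pipeline.

```python
import csv
import io
import json
import sqlite3
from datetime import datetime

# Hypothetical raw exports: the same kind of fact (daily installs) arrives
# from two sources in two different formats.
ANDROID_CSV = """day,installs
03/22/2024,120
03/23/2024,95
"""

IOS_JSON = '[{"date": "2024-03-22", "count": "80"}, {"date": "2024-03-23", "count": "102"}]'


def extract():
    """Extract: pull raw records from each source in its native format."""
    android = list(csv.DictReader(io.StringIO(ANDROID_CSV)))
    ios = json.loads(IOS_JSON)
    return android, ios


def transform(android, ios):
    """Transform: map both sources onto one schema (ISO date, platform, int installs)."""
    rows = []
    for rec in android:
        day = datetime.strptime(rec["day"], "%m/%d/%Y").date().isoformat()
        rows.append((day, "android", int(rec["installs"])))
    for rec in ios:
        rows.append((rec["date"], "ios", int(rec["count"])))
    return rows


def load(rows, conn):
    """Load: write the unified rows into a single target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS installs (day TEXT, platform TEXT, installs INTEGER)"
    )
    conn.executemany("INSERT INTO installs VALUES (?, ?, ?)", rows)
    conn.commit()


def run_etl(conn):
    android, ios = extract()
    load(transform(android, ios), conn)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    run_etl(conn)
    # A dashboard query can now treat both platforms uniformly.
    for row in conn.execute(
        "SELECT day, SUM(installs) FROM installs GROUP BY day ORDER BY day"
    ):
        print(row)
```

Once the data has been normalized to one schema, the dashboard query no longer needs to know which source a row came from. Here it prints `('2024-03-22', 200)` and `('2024-03-23', 197)`.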

Building and maintaining a data warehouse can require hundreds or thousands of ETL tool programs. As a result, building data warehouses with ETL tools can be time-consuming, cumbersome, and error-prone. This introduces delays and unnecessary risk into BI projects that need the most up-to-date data and the agility to react quickly to changing business demands.

There are four primary types of ETL tools:

Batch Processing: Traditionally, on-premises batch processing was the primary ETL process. In the past, processing large data sets strained an organization's computing power, so these processes were run in batches during off-hours. Today's ETL tools can still do batch processing. Because they are often cloud-based, however, they are less constrained in when and how quickly the processing occurs.

Cloud-Native: Cloud-native ETL tools can extract and load data from sources directly into a cloud data warehouse. They then use the power and scale of the cloud to transform the data.

Open Source: Open source tools such as Apache Kafka offer a low-cost alternative to commercial ETL tools. However, some open source tools support only one stage of the process, such as extracting data. Others are not designed to handle data complexities or change data capture (CDC). It can also be hard to get support for open source tools.

Real-Time: Today's business demands real-time access to data. This requires organizations to process data in real time, using a distributed model with streaming capabilities. Streaming ETL tools, both commercial and open source, offer this capability.

In the ETL process, transformation is performed in a staging area outside the data warehouse, before the data is loaded into it. The entire data set must be transformed before loading, so transforming large data sets can take a lot of time up front. The benefit is that analysis can begin as soon as the data is loaded. This is why ETL is most appropriate for small data sets that require complex transformations.

In the ELT (Extract, Load, Transform) process, data is loaded into the target system first and transformed there on an as-needed basis. This means ELT takes less time up front. If the cloud solution lacks sufficient processing power, however, transformation can slow down querying and analysis. This is why ELT is more appropriate for larger structured and unstructured data sets and when timeliness is important.

The key difference between ETL and ELT is when the data is transformed. Many organizations use both processes to cover their wide range of data pipeline needs.
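The difference in *when* the transformation happens can be sketched with a minimal example. It assumes a hypothetical in-app event feed and uses Python's built-in `sqlite3` as a stand-in for the target warehouse. The ETL path cleans the records in Python and loads only the result. The ELT path loads the raw records unchanged and defines the transformation as a SQL view inside the target, so the transformation runs there each time the view is queried. The table, view, and field names are illustrative only.

```python
import sqlite3

# Hypothetical raw event records: (user_id, event name with inconsistent casing, amount in cents)
RAW_EVENTS = [
    ("u1", "Purchase", 499),
    ("u2", "purchase", 1299),
    ("u1", "VIEW", 0),
]


def etl(conn):
    """ETL: transform outside the target (here, in Python), then load only the result."""
    cleaned = [
        (user, name.lower(), cents / 100)
        for user, name, cents in RAW_EVENTS
        if name.lower() == "purchase"
    ]
    conn.execute("CREATE TABLE etl_purchases (user_id TEXT, event TEXT, amount_usd REAL)")
    conn.executemany("INSERT INTO etl_purchases VALUES (?, ?, ?)", cleaned)


def elt(conn):
    """ELT: load the raw data as-is, then transform inside the target with SQL."""
    conn.execute("CREATE TABLE raw_events (user_id TEXT, event TEXT, amount_cents INTEGER)")
    conn.executemany("INSERT INTO raw_events VALUES (?, ?, ?)", RAW_EVENTS)
    # The view is evaluated by the target database whenever it is queried,
    # so the transformation runs in the target system, on demand.
    conn.execute(
        """CREATE VIEW elt_purchases AS
           SELECT user_id, LOWER(event) AS event, amount_cents / 100.0 AS amount_usd
           FROM raw_events
           WHERE LOWER(event) = 'purchase'"""
    )


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    etl(conn)
    elt(conn)
    query = "SELECT user_id, event, amount_usd FROM {} ORDER BY user_id"
    print(conn.execute(query.format("etl_purchases")).fetchall())
    print(conn.execute(query.format("elt_purchases")).fetchall())
```

Both paths return the same purchase rows. The ELT path also keeps the untouched `raw_events` table in the target, so a different transformation can be defined later without re-extracting. The cost is that the target system must do the transformation work at query time.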
